In 2024–25, I was fortunate to get a front-row view of choices facing artists who seek to engage AI in a photography-based practice. I don’t mean artists who are merely seeking a new tool kit, but rather those adopting aspects of AI to examine the future and the identity of photography itself. While guest-editing an issue of the journal Photography and Culture and contributing to a special issue of Aperture, both on the relationship of AI to photography, I learned from my collaborators that there are two main paths currently open. And while those paths appear divergent for now, they may, thanks to rapid changes in the field, soon intersect to form a complex mesh of possibilities.

Widespread anxiety arose about AI’s incursions into creative work in late 2022, when ChatGPT and generative image AI tools like DALL-E and Midjourney became widely accessible. But for a significant group of artists, AI wasn’t new. Whether identifying as photographers, new media artists, or conceptualists focused on the socio-politics of technology, some artists had been exploring AI as both subject and method for years. American artist Trevor Paglen is perhaps the best known amongst these. For more than a decade, his practice has combined photography, appropriated archives and datasets, and purpose-built AI models in projects that graphically expose the human biases informing computer vision, especially in the labelling of photographs for machine-learning training sets and the development of algorithms for interpreting images.¹

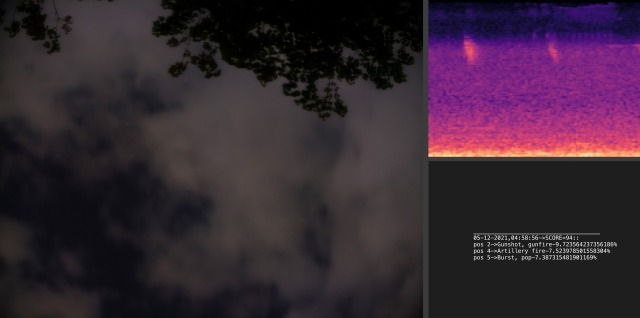

Paglen’s work is the template for what I’ll call the tech-jamming approach. This path can only be taken by artists with the know-how to train AI models and build custom systems, but it is defined more by goal than by technique. Typically, the objective is to flip the technology back on itself in a subversive or critical way, or to query the ethical implications of AI applications that increasingly pervade everyday life. Josh Azzarella’s project Untitled #310 (2021), published in our special issue of Photography and Culture, is a compelling example. Using a free database of labelled sounds, the artist trained an AI model to recognize the acoustic fingerprint of a gunshot (akin to the ShotSpotter™ technology deployed by many police departments); when the AI identified the sound of a “gunshot,” it immediately triggered a sky-facing camera to capture an image. When exhibited, each work in the series is composed of three different representations of the same data, evoking Joseph Kosuth’s famous conceptual One and Three works of the 1960s: the photograph of the sky, the spectrogram of the triggering sound, and the readout of the sound’s classification (“gunshot, gunfire”) along with the AI’s threshold data for that identification. “Unlike private surveillance devices utilized for public safety,” writes Azzarella, “Untitled #310 is transparent about its failures and its images openly indeterminate.”²

Untitled #310 highlights how AI’s “reliance on algorithmic recognition introduces the potential for misinterpretation” – a potentially deadly consequence in the growing number of surveillance, drone, and facial recognition systems that make identifications, draw conclusions, and execute actions through artificial intelligence. Simultaneously, the project poses questions about how AI will challenge fundamental definitions of the photograph. Photography’s reputation as truthful witness derives from its status (however contested) as indexical; the photograph, as a sign or representation, is understood to be caused by the very thing it pictures. What kind of evidence will the photograph become when initiated by the non-human trigger of algorithmic determination? What does that picture represent, relative to the (unreliable) phenomenon that caused it and the (potential) violence it cannot record?

The tech-jamming approach is, at least for now, likely to result in artworks more conceptual than pictorial. The product might be an oozing visualization of statistical patterns, a series of glitchy images rendered by algorithms operating on limited datasets, or a torrent of prompt-generated pictures intended to distort the data pool associated with a word or proper name.

The second approach, one whose possibilities for photographers and artists are growing daily, is one I’ll call “co-creative.” When diffusion-based AI models became available as consumer products, capable of generating images from a text prompt that can pass for camera-made photographs, commentary focused on whether AI threatened to “replace” photography and whether its “photoreal” outputs constituted fraud. Photo artist and AI engineer H. Rashed Haq argues such a binary distinction is already outdated, given that the computational photography of smartphone cameras already uses AI enhancements to “pre-process” our every captured image. Photography, at least digital photography, is better understood as a technological spectrum in which generative AI presents a dramatic extension. Haq calls this moment the advent of “computational creativity.”

Computational creativity may be in its early stages but already presents rich possibilities for visual expression within a realm still recognizable as photographic – meaning expression that calls on photographic aesthetics and their associations to make its point. Haq identifies several emerging modes: perceptual realism (deploying traditional photo aesthetics to assert the existence of something that was not or could not be photographed), stochasticity (exploiting the role of chance), multi-modality (combining visual and text inputs and outputs), combinatorial creativity (algorithmic blending of diverse elements or styles), and most expansively and provocatively, “the ability to photograph the imagination.” Several are combined in Haq’s ethereal rendering of the construction of St. Paul’s Cathedral, a pre-photography edifice, with Easter-egg anachronisms embedded in the composition. Transcending the limitations of time and space, he argues, generative AI allows artists to render dreamed landscapes, abstract concepts, or surreal scenes “with precision and creativity.”³ We may be tempted to protest that the imaginary is not photography’s proper subject, but recall that artistic advances in photography have always been driven by efforts to make the medium convey ideas and values beyond the visible.

Although early outputs leaned to gimmick, several recent projects demonstrate computational creativity’s powerful promise. In just one notable sector of contemporary practice, it provides the means to alter the past or speculate futures for racialized subjects who’ve historically experienced photography as violence or erasure. Minne Atairu, featured in the Aperture special issue, works with Midjourney to generate AI-photographic images of blue-Black beauty, harnessing the aesthetics of Afrofuturism to a critical exploration of AI’s limitations. Her results reveal that algorithms are trained to associate “beauty” with lighter-skinned complexions, and point out how gaps in underlying datasets make prompts like “Black woman with blond braids” nearly impossible to render. More poignant is Igùn (2025–), her series in which a custom AI model is trained on archival photographs of the Benin Bronzes to realize, via 3D printing, a continuance of her ancestral homeland’s artistic legacy uninterrupted by colonialism and looting.⁴ Atairu’s art suggests that much-needed critique of AI operations and datasets can be compatible – even in a single artist’s practice – with the technology’s co-creative capacities to summon new and alternate realities.

Atairu’s mix of conceptual goals and varied AI tools points to the imminent future. As generative-image AI continues to evolve, Haq points out, users in 2026 no longer need to know how to code from scratch; they can use coding assistants like Cursor AI to generate their own AI models, which can now be trained on consumer-grade hardware without sophisticated cloud-storage resources (unimaginable just two years ago). An increasing number of tools also enable local customization of massive “frontier” models like Midjourney for individualized aims. These developments expand the tool kit and potential output formats for all artists – including those who take tech-jamming or socio-critical approaches to AI as a subject. Prompting, meanwhile, becomes an ever more important and nuanced skill for wielding models effectively, putting speech on par with pre-visualization in this newly elastic version of photography. For photojournalism, political messaging, and advertising, among the many fields where we count on photographic aesthetics to assure us of facts, there’s ample cause for caution. But for artists pushing toward photography’s creative future, the horizon is boundless.